5 Ways To Monitor Your DynamoDB Tables Like A Pro

Monitor your tables for metrics such as costs, performance, latency and more.

Monitoring your DynamoDB tables regularly is about much more than visualizing traffic patterns and data usage.

Monitoring helps you save money by identifying opportunities for right-sizing, and it surfaces sources of latency so you can improve your users’ experience on your platform.

By keeping performance high and latency low, you can also manage costs more easily.

However, there are hundreds of metrics you can choose from in DynamoDB and developers often don’t know where to start.

Instead of being overwhelmed with these metrics, you need to focus on the few that matter.

Here, I’ll outline the most important and relevant metrics in your DynamoDB tables and show you how to monitor them effectively.

1. Cost Monitoring

DynamoDB has a not-so-simple pricing model. Put simply, you are charged based on read capacity units (RCUs) and write capacity units (WCUs).

One RCU covers one strongly consistent read per second of an item up to 4 KB, and one WCU covers one write per second of an item up to 1 KB.

For the standard table class in provisioned mode, DynamoDB charges about $0.00013 per RCU per hour and $0.00065 per WCU per hour (quite cheap). Exact prices vary by region.
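To make the pricing concrete, here is a rough sketch of the arithmetic. It assumes the two prices quoted above are hourly rates per provisioned unit and that a month has about 730 hours; the function names are my own, and you should check the current pricing page for your region before relying on the numbers.

```python
import math

# Assumed hourly prices per provisioned unit, taken from the figures quoted above.
RCU_PRICE_PER_HOUR = 0.00013
WCU_PRICE_PER_HOUR = 0.00065
HOURS_PER_MONTH = 730  # common approximation, not an exact billing rule


def read_units(item_bytes: int, strongly_consistent: bool = True) -> float:
    """Read units consumed by one read of an item of the given size."""
    units = max(1, math.ceil(item_bytes / 4096))  # reads are metered in 4 KB blocks
    # Eventually consistent reads cost half as much as strongly consistent ones.
    return units if strongly_consistent else units / 2


def write_units(item_bytes: int) -> int:
    """Write units consumed by one write of an item of the given size."""
    return max(1, math.ceil(item_bytes / 1024))  # writes are metered in 1 KB blocks


def monthly_cost(rcus: int, wcus: int) -> float:
    """Approximate monthly cost of keeping this much capacity provisioned."""
    hourly = rcus * RCU_PRICE_PER_HOUR + wcus * WCU_PRICE_PER_HOUR
    return hourly * HOURS_PER_MONTH
```

Under these assumptions, a table provisioned with 10 RCUs and 5 WCUs costs roughly $3.32 per month, and reading a 6 KB item with strong consistency consumes 2 RCUs.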

Monitoring your reads and writes is the basis of monitoring in DynamoDB.

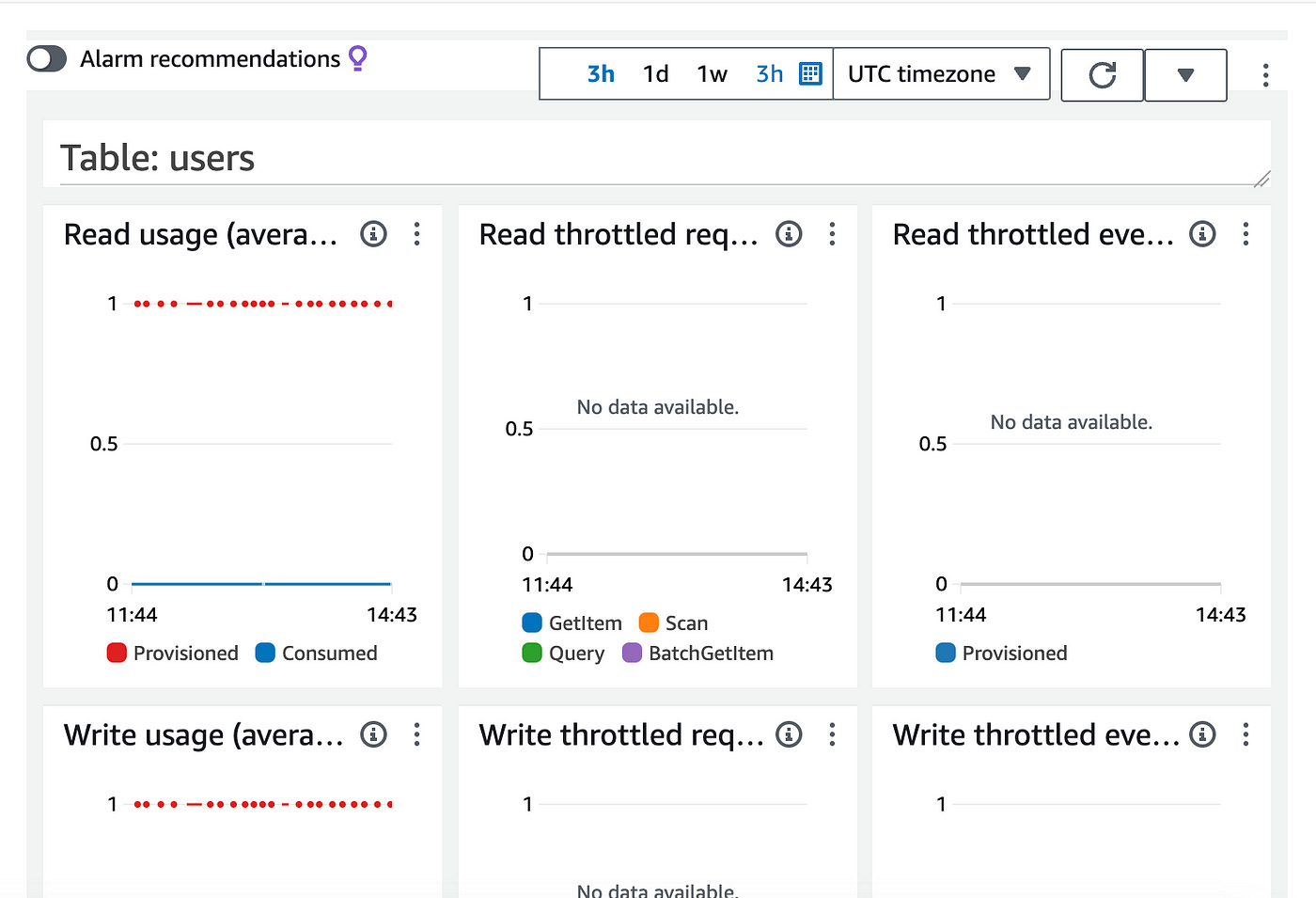

Head over to the Monitor section of your DynamoDB table to view the table’s metrics.

Below in the CloudWatch metrics section you’ll see some graphs.

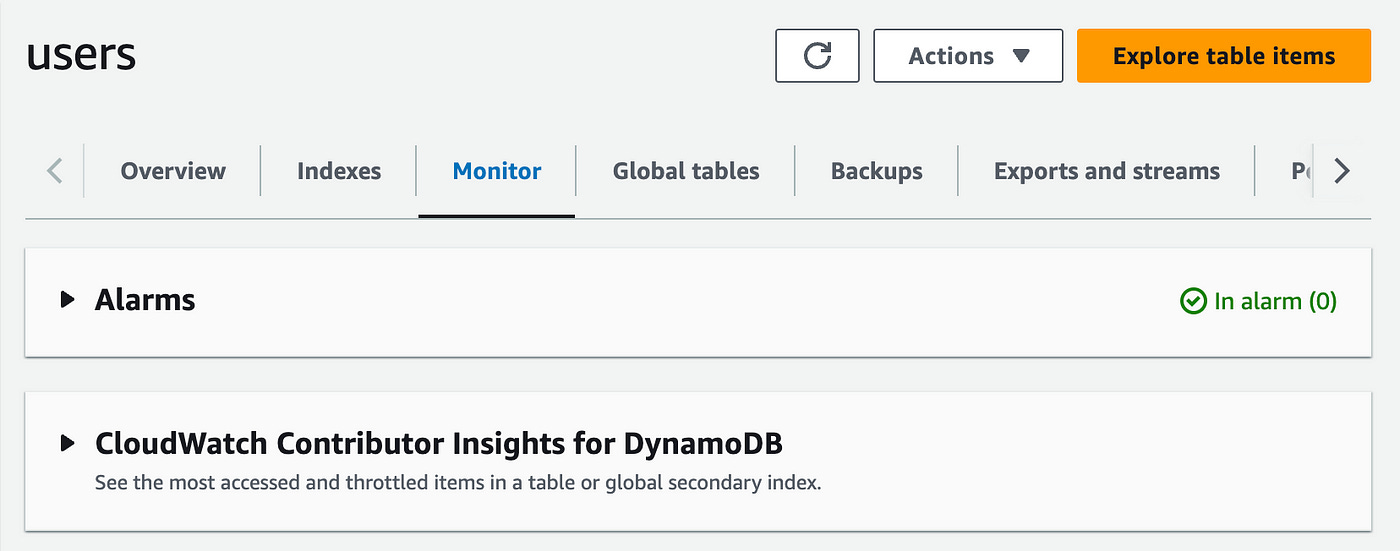

On the first container, labeled “Read usage”, open the three-dot menu and click “Enlarge” to see a larger version of the graph.

In the enlarged graph, you can see I consumed 1.7 read units (RCUs).

You can then set up alarms in CloudWatch to notify you when a table’s RCUs or WCUs cross a threshold you define.

This is crucial to maintaining costs at a level you expect and budget for.

Learn how to set up billing alarms for DynamoDB here.
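If you prefer to create a usage alarm from code rather than the console, a minimal sketch with boto3 could look like the following. The alarm name, the five-minute evaluation window, and the SNS topic ARN are placeholders I made up for illustration; the metric name and namespace are the standard CloudWatch ones for DynamoDB.

```python
def build_read_alarm(table_name: str, threshold: float, topic_arn: str) -> dict:
    """Build the arguments for CloudWatch's put_metric_alarm call."""
    return {
        "AlarmName": f"{table_name}-consumed-rcu-high",  # hypothetical naming scheme
        "Namespace": "AWS/DynamoDB",
        "MetricName": "ConsumedReadCapacityUnits",
        "Dimensions": [{"Name": "TableName", "Value": table_name}],
        "Statistic": "Sum",  # total read units consumed in each period
        "Period": 60,  # seconds
        "EvaluationPeriods": 5,  # alarm after 5 consecutive breaching minutes
        "Threshold": threshold,  # read units per minute
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",  # an idle table should not alarm
        "AlarmActions": [topic_arn],  # SNS topic to notify
    }


def create_read_alarm(table_name: str, threshold: float, topic_arn: str) -> None:
    """Create the alarm. Requires boto3 and AWS credentials."""
    import boto3  # imported here so the builder above works without AWS access

    cloudwatch = boto3.client("cloudwatch")
    cloudwatch.put_metric_alarm(**build_read_alarm(table_name, threshold, topic_arn))
```

Swapping the metric name for ConsumedWriteCapacityUnits gives you the equivalent alarm for writes.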

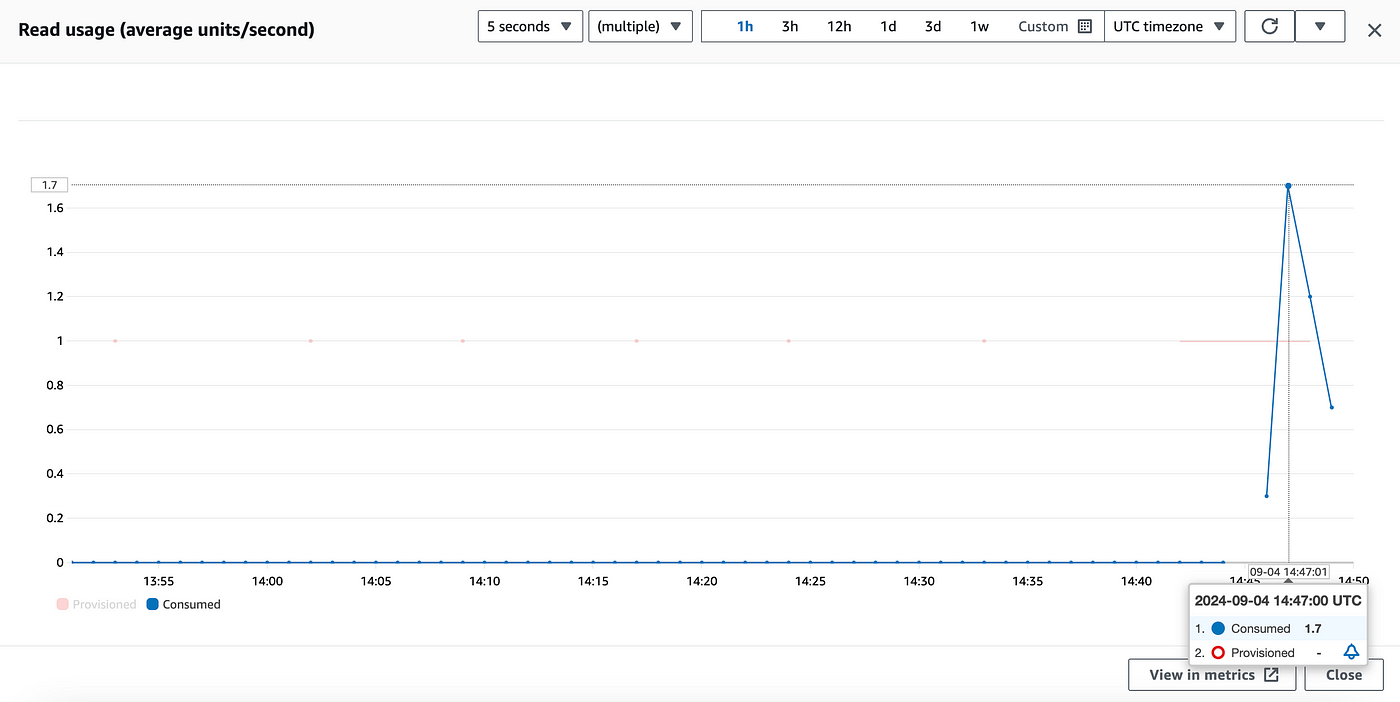

2. Performance Monitoring

Monitoring performance, or latency to be precise, tells you how fast your application is serving data.

This is often the difference between a great user experience and a bad one.

In the Latency section below, you can visualize how fast or slow your data is being read/written.

On my example table, get latency peaks at 1.86 ms and write latency at 3.84 ms, which is quite phenomenal.

If I noticed higher read or write latencies in my application, this is the first place I would look. From here, I could also scroll down to the Error section to check whether any errors are contributing to the latency.
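The same latency numbers can be pulled from CloudWatch in code. The sketch below assumes the SuccessfulRequestLatency metric, which DynamoDB reports in milliseconds per operation (for example GetItem or PutItem); the helper names and the one-hour window are my own choices.

```python
from datetime import datetime, timedelta, timezone


def latency_query(table_name: str, operation: str, hours: int = 1) -> dict:
    """Build the arguments for CloudWatch's get_metric_statistics call."""
    end = datetime.now(timezone.utc)
    return {
        "Namespace": "AWS/DynamoDB",
        "MetricName": "SuccessfulRequestLatency",
        "Dimensions": [
            {"Name": "TableName", "Value": table_name},
            {"Name": "Operation", "Value": operation},  # e.g. "GetItem", "PutItem"
        ],
        "StartTime": end - timedelta(hours=hours),
        "EndTime": end,
        "Period": 300,  # one datapoint per 5 minutes
        "Statistics": ["Average", "Maximum"],
    }


def peak_latency_ms(datapoints: list):
    """Return the highest Maximum across datapoints, or None if there are none."""
    return max((point["Maximum"] for point in datapoints), default=None)


def fetch_peak_latency(table_name: str, operation: str):
    """Query CloudWatch for the peak latency. Requires boto3 and AWS credentials."""
    import boto3  # imported here so the helpers above work without AWS access

    cloudwatch = boto3.client("cloudwatch")
    response = cloudwatch.get_metric_statistics(**latency_query(table_name, operation))
    return peak_latency_ms(response["Datapoints"])
```

Calling fetch_peak_latency once for "GetItem" and once for "PutItem" gives you the read and write peaks that the console graph shows.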

3. Throughput Monitoring

Provisioned throughput is the read and write capacity, expressed in RCUs and WCUs, that your DynamoDB table is configured to handle.

It’s important to monitor this to avoid throttling and to ensure your application performs at optimal levels.

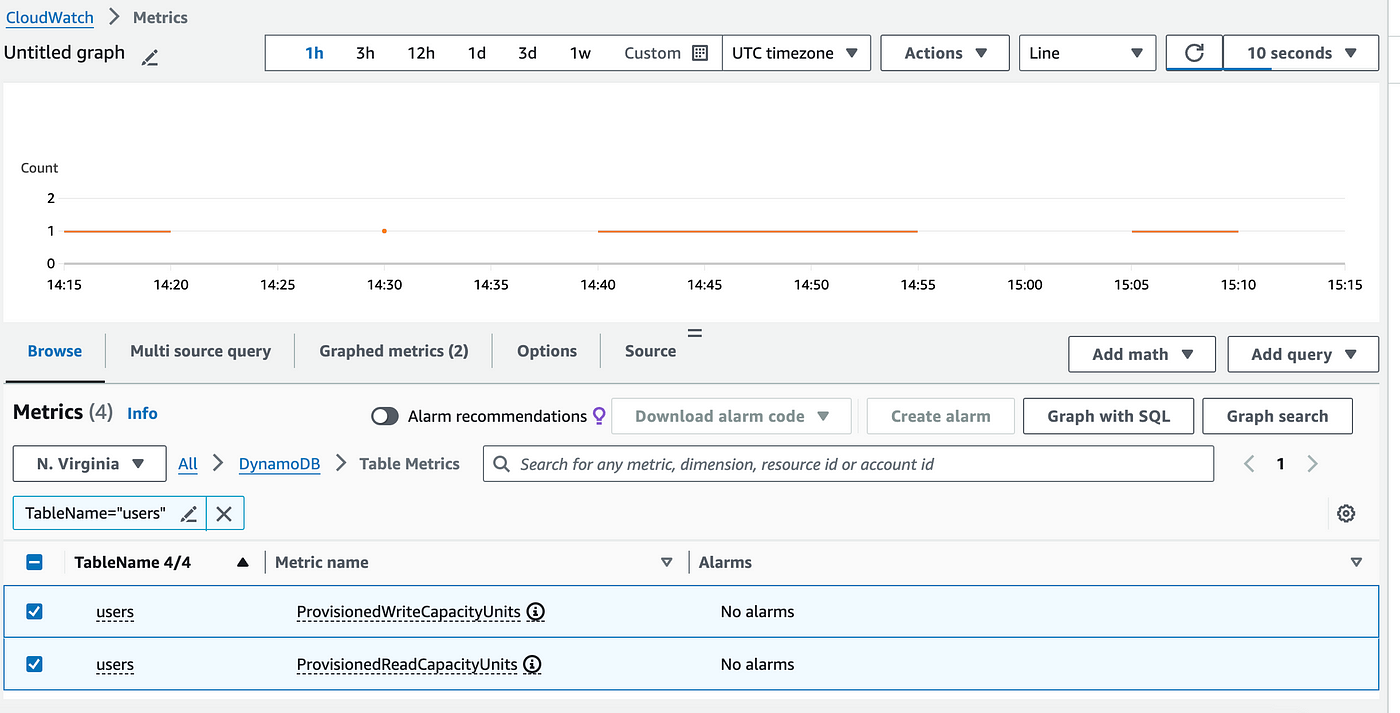

To monitor throughput, you can use the ProvisionedReadCapacityUnits and ProvisionedWriteCapacityUnits metrics in CloudWatch.

To view them, click the “View all in CloudWatch” button below.

In CloudWatch, you’ll land directly on your table’s metrics.

Search for ProvisionedReadCapacityUnits to find the throughput metrics for provisioned reads and writes on your table.

Here I’ve selected both ProvisionedReadCapacityUnits and ProvisionedWriteCapacityUnits to view their values:

As you can see, my table currently has 1 RCU and 1 WCU provisioned.

If you use provisioned capacity with auto scaling, this metric is especially useful under heavy traffic, because it shows how your provisioned capacity changes over time. On-demand tables have no provisioned capacity, so for those watch ConsumedReadCapacityUnits and ConsumedWriteCapacityUnits instead.

Comparing provisioned capacity with the RCUs and WCUs your table actually consumes gives you valuable insight into how to right-size your database capacity.
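As a rough sketch of that right-sizing comparison: CloudWatch reports consumed capacity as a sum over each period, so dividing by the period length gives average units per second, which you can compare with the provisioned value. The function name is my own, and any utilization target you compare against is a judgment call rather than an AWS rule.

```python
def utilization(consumed_sum: float, period_seconds: int, provisioned_units: float) -> float:
    """Fraction of provisioned capacity used on average during one period.

    consumed_sum is the Sum statistic of ConsumedReadCapacityUnits (or the
    write equivalent) for a period of period_seconds.
    """
    if period_seconds <= 0 or provisioned_units <= 0:
        raise ValueError("period_seconds and provisioned_units must be positive")
    average_units_per_second = consumed_sum / period_seconds
    return average_units_per_second / provisioned_units
```

For example, 3,000 read units consumed in a 60-second period on a table with 100 provisioned RCUs is an average of 50 units per second, or 50% utilization. A table that sits far below its provisioned capacity for long stretches is a candidate for scaling down.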

4. Security Monitoring

Security monitoring is crucial to ensure your DynamoDB tables are encrypted and that your IAM policies are correctly configured to prevent unauthorized access.

If you’re using KMS keys, you can monitor how those keys are being used, for example by reviewing the KMS API calls recorded in AWS CloudTrail.

If you’re relying on IAM policies alone, review them regularly and use IAM Access Analyzer to find users who have more permissions than they strictly need.

You can follow this guide to learn how to use IAM Access Analyzer.
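For the encryption side of the check, here is a small sketch that summarizes how a table is encrypted, based on the response of DynamoDB's DescribeTable call. It assumes that a table with no SSEDescription in the response is using the default AWS owned key; the function names and the wording of the summary strings are my own.

```python
def encryption_summary(describe_table_response: dict) -> str:
    """Summarize a table's encryption settings from a DescribeTable response."""
    sse = describe_table_response["Table"].get("SSEDescription")
    if not sse:
        # Assumption: no SSEDescription means the default AWS owned key is in use.
        return "AWS owned key (default)"
    return f'{sse.get("SSEType", "unknown")} ({sse.get("Status", "unknown")})'


def check_table(table_name: str) -> str:
    """Fetch and summarize a table's encryption. Requires boto3 and AWS credentials."""
    import boto3  # imported here so encryption_summary works without AWS access

    dynamodb = boto3.client("dynamodb")
    return encryption_summary(dynamodb.describe_table(TableName=table_name))
```

Running check_table over each of your table names gives you a quick audit of which tables use a KMS key and which rely on the default.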

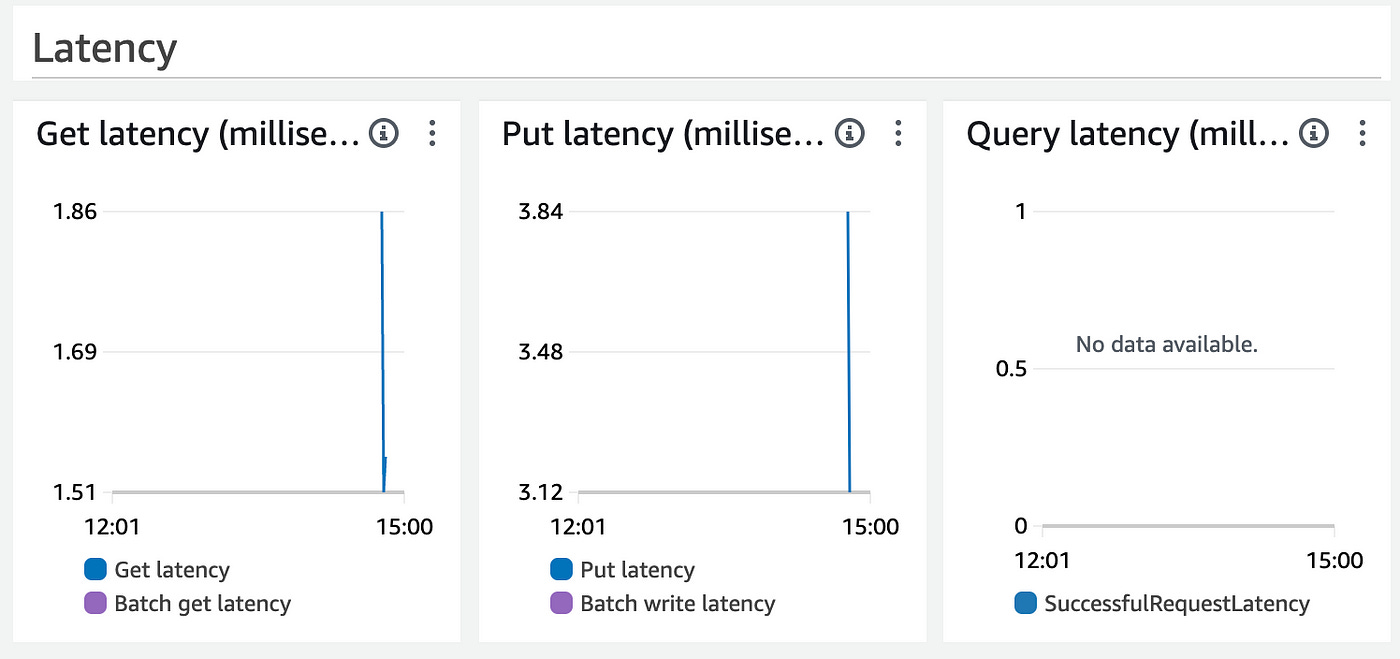

5. Alerts & Alarms

Setting up alerts and alarms in CloudWatch is one of the most effective ways to proactively monitor your DynamoDB tables and their associated costs.

You can set up alarms for key metrics like RCUs, WCUs, latency, and costs, and they will notify you when something goes wrong or crosses a threshold.

You can then use CloudWatch alarms to trigger automated actions like scaling up provisioned capacity on your DynamoDB table or sending a notification to a DevOps team.

You can also use Lambda functions for automation.

For instance, with DynamoDB Streams enabled, a change to an item in your table can trigger a Lambda function to process the event.

In the Lambda function code, you can adjust throughput capacity or modify table items accordingly.
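Here is a minimal sketch of what such a Lambda function could look like. It only counts the stream records by event type and logs the keys of newly inserted items; what you actually do with each record (adjusting capacity, updating items, sending notifications) is up to you, and the handler name is simply the conventional one.

```python
from collections import Counter


def handler(event, context):
    """Process a batch of DynamoDB Streams records delivered to Lambda."""
    counts = Counter()
    for record in event.get("Records", []):
        event_name = record["eventName"]  # "INSERT", "MODIFY" or "REMOVE"
        counts[event_name] += 1
        if event_name == "INSERT":
            # Keys holds the primary key of the changed item in DynamoDB JSON.
            print("New item:", record["dynamodb"]["Keys"])
    return dict(counts)
```

You can test the handler locally by passing it a dictionary shaped like a stream event, with a "Records" list whose entries carry "eventName" and "dynamodb" fields.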

Conclusion

Monitoring DynamoDB tables effectively requires first understanding how to use and read integrated CloudWatch metrics.

By keeping an eye on key metrics like costs, latency, read/write usage and capacity, you can ensure your DynamoDB tables remain efficient and cost-effective.

With the right monitoring strategy, you not only optimize your database usage and costs but also create more resilient and scalable applications.

👋 My name is Uriel Bitton and I’m committed to helping you master Serverless, Cloud Computing, and AWS.

🚀 If you want to learn how to build serverless, scalable, and resilient applications, you can also follow me on LinkedIn for valuable daily posts.

Thanks for reading and see you in the next one!