How A Simple Serverless Error Almost Cost Us Hundreds Of Dollars

And how I prevented this from happening and what it taught us.

I once worked as an AWS developer, designing the database and APIs for a client’s application.

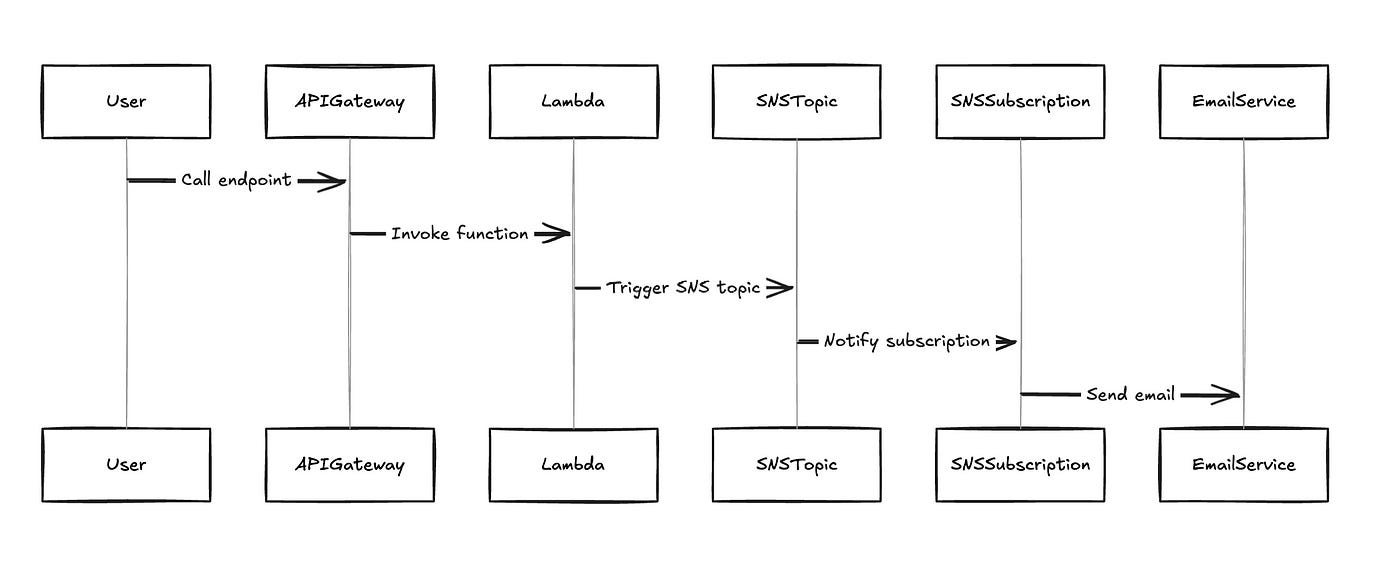

Our team of 4 designed a microservice responsible for sending out emails for an application form.

Long story short, we had a Lambda function publish to an SNS topic, which in turn triggered a subscription that sent emails to the applicant as well as to us (in the development environment).

When I got around to testing this function, I noticed a very strange behavior.

The email client on my machine kept pinging with new emails.

It started with one email, then 10, 100 and within a few minutes, I had received around 200 emails.

All with the same message about the test template we wrote.

The worst part was that the emails didn’t stop. Instead, they were actually increasing in frequency.

At the start, we were getting a few emails a minute, but after several minutes, we were getting several emails per second.

At this rate, if we left the issue unchecked, we would have sent out 100K emails in a couple of hours.

And if anyone else had tested the function at the same time, that number would have been even higher.

I didn’t want to imagine the costs associated with this.

But if I had to guess, it would be somewhere north of $100 after a few hours.

And thousands of dollars if the function was left for days.

Imagine deploying Friday afternoon and fixing it on Monday morning!

But before emails could rise higher than about 20K, I dove into the Lambda function code.

I immediately noticed there was no error handling. There should have been a try-catch block wrapping all of the code.

The catch block would have caught the error and prevented this issue.

But to understand how and why, we must first understand how Lambda and SNS work.

After identifying and fixing the issue, here are my main takeaways and lessons.

How Did This Happen?

When a Lambda function is invoked to send an email via SNS, it publishes a message to an SNS topic.

If the Lambda function encounters an error after sending the message to SNS but before completing execution, the function will still be retried, and each retry publishes the message again.

For asynchronous invocations, Lambda retries a failed function up to 2 more times by default, for 3 attempts in total.

In the case of SNS, here’s what the official docs state about SNS retries:

“If Lambda is not available, SNS will retry 2 times at 1 seconds apart, then 10 times exponentially backing off from 1 seconds to 20 minutes and finally 38 times every 20 minutes for a total 50 attempts over more than 13 hours before the message is discarded from SNS”.

This could result in many SNS executions and explains the almost infinite loop of emails.
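To get a feel for the schedule in that quote, here is a rough sketch that adds up the delays. The function name `sns_retry_delays` and its parameters are made up for illustration, and the backoff phase is assumed to grow geometrically from 1 second to 20 minutes, which the quote does not spell out.

```python
def sns_retry_delays(immediate=2, backoff=10, steady=38,
                     min_delay=1.0, max_delay=1200.0):
    """Approximate the delay (in seconds) before each retry in the quoted policy."""
    # Phase 1: a couple of quick retries, 1 second apart.
    delays = [min_delay] * immediate
    # Phase 2: exponential backoff from min_delay up to max_delay.
    # Assumption: a geometric progression across the backoff retries.
    ratio = (max_delay / min_delay) ** (1 / (backoff - 1))
    delays += [min_delay * ratio ** i for i in range(backoff)]
    # Phase 3: steady retries every 20 minutes.
    delays += [max_delay] * steady
    return delays


delays = sns_retry_delays()
total_hours = sum(delays) / 3600
print(f"{len(delays)} attempts over roughly {total_hours:.1f} hours")
```

Under these assumptions the total comes out a little over 13 hours, which lines up with the quote. The point is that a single failing message can keep producing retries long after you have stopped testing.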

Why Did This Happen?

This happened for 2 reasons:

The Lambda function ran into an uncaught error, which caused Lambda to auto-retry it a few more times.

Simultaneously, SNS was also retrying delivery to the subscription that sends the email. Since the Lambda function looked “unavailable”, SNS kept retrying on the schedule quoted above, resulting in a mass of emails being sent out after a while.

SNS also queues messages for delivery. This means that instead of satisfying every request at the same time, it enqueues messages and works through them one at a time.

This also explains why emails kept coming in even when no Lambda functions had been executed in a while.

How Could We Have Avoided This?

Error Handling

This is how I prevented the problem from happening again.

By adding error handling (try-catch blocks) in all the necessary places, the function exited cleanly on any error.

If the Lambda function had gracefully handled errors from the start, it would not have retried itself. This would make it available to SNS, and SNS would not be retrying messages.
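Here is a minimal sketch of what that error handling could look like in a Python handler. This is not our original code: `make_handler`, the `publish` callable, and the `email` field on the event are hypothetical names used for illustration. The publish step is injected so the handler can be tried out without AWS credentials.

```python
import json
import logging

logger = logging.getLogger(__name__)


def make_handler(publish):
    """Build a Lambda-style handler around a `publish` callable (hypothetical helper).

    In a real function, `publish` could wrap boto3's SNS client, for example:
        sns = boto3.client("sns")
        publish = lambda **kw: sns.publish(TopicArn=TOPIC_ARN, **kw)
    """
    def handler(event, context):
        try:
            # Assumption: the event carries the applicant's address under "email".
            applicant_email = event["email"]
            publish(
                Subject="Application received",
                Message=json.dumps({"to": applicant_email}),
            )
            return {"statusCode": 200, "body": "notification published"}
        except Exception:
            # Log and return instead of re-raising: the invocation then ends
            # normally, so Lambda has no failed invocation to retry.
            logger.exception("Failed to publish notification")
            return {"statusCode": 500, "body": "notification failed"}

    return handler
```

The trade-off with swallowing the exception is that the failure only shows up in the logs, so it is worth pairing this with an alarm or some other way of noticing the errors.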

To fix the issue, after the fact, all I did was delete the SNS topic and the emails stopped a few hours later (many were still in the queue).

Dead Letter Queue

When a Lambda function keeps retrying a failing event, it is often useful to configure a dead letter queue.

This is also a possible preventative measure.

Combined with a maximum retry attempts setting, the dead letter queue keeps a failing event from being retried more than a few times.

After the defined maximum retry attempts, the failed event is sent to the dead letter queue and is not retried again.
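As a rough sketch, this is how the retry limit and the failure queue could be set from Python. The helpers `build_retry_config` and `apply_retry_config` and the default values are hypothetical. The call they wrap, `put_function_event_invoke_config` on boto3's Lambda client, configures an on-failure destination for asynchronous invocations. A classic dead letter queue is set separately through `DeadLetterConfig` on `update_function_configuration`.

```python
def build_retry_config(function_name, queue_arn,
                       max_retries=1, max_event_age_seconds=3600):
    """Build keyword arguments for put_function_event_invoke_config (hypothetical helper)."""
    # Lambda only accepts 0, 1, or 2 retries for asynchronous invocations.
    if not 0 <= max_retries <= 2:
        raise ValueError("max_retries must be between 0 and 2")
    return {
        "FunctionName": function_name,
        "MaximumRetryAttempts": max_retries,
        "MaximumEventAgeInSeconds": max_event_age_seconds,
        # Failed events are sent here once the retries are used up.
        "DestinationConfig": {"OnFailure": {"Destination": queue_arn}},
    }


def apply_retry_config(lambda_client, config):
    """Apply the config with a boto3 Lambda client, e.g. boto3.client("lambda")."""
    return lambda_client.put_function_event_invoke_config(**config)
```

Building the arguments separately from the API call makes it easy to check the values before anything touches a real function. The function name and queue ARN are whatever your own stack uses.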

Conclusion

Running into issues is the best way to learn, especially when costs are at stake. The biggest problems often teach us the greatest lessons.

In our case, we learned that mechanisms such as error handling and dead-letter queues are critical when dealing with Lambda.

Robust error-handling code is a must and could save a lot of time and money.

👋 My name is Uriel Bitton and I’m committed to helping you master Serverless, Cloud Computing, and AWS.

🚀 If you want to learn how to build serverless, scalable, and resilient applications, you can also follow me on LinkedIn for valuable daily posts.

Thanks for reading and see you in the next one!