How I Built A Custom Email Newsletter App

How I built it to be highly scalable on top of several AWS services.

Building an email newsletter system is not as hard as it may seem at first.

I recently needed to build this for a client's blog website.

My client didn’t like the idea of paying a few hundred dollars a month for a solution like Beehiiv or ConvertKit.

My work would cost him more upfront, but once it was built he wouldn’t be paying a monthly fee for the next 10–20 years.

I approached building this platform in the simplest way.

I used only a handful of AWS services.

Building The Newsletter Platform

Overview

Here’s the general overview of how I built the platform:

I stored the email subscribers on Amazon DynamoDB

I created 2 AWS Lambda functions to handle queueing and sending out emails.

I used Amazon SES to let Lambda send out emails in bulk

I used Amazon SQS to enqueue bulk email jobs

I created a Next.js frontend to publish the newsletter posts to subscribers

Storing Email Subscribers In DynamoDB

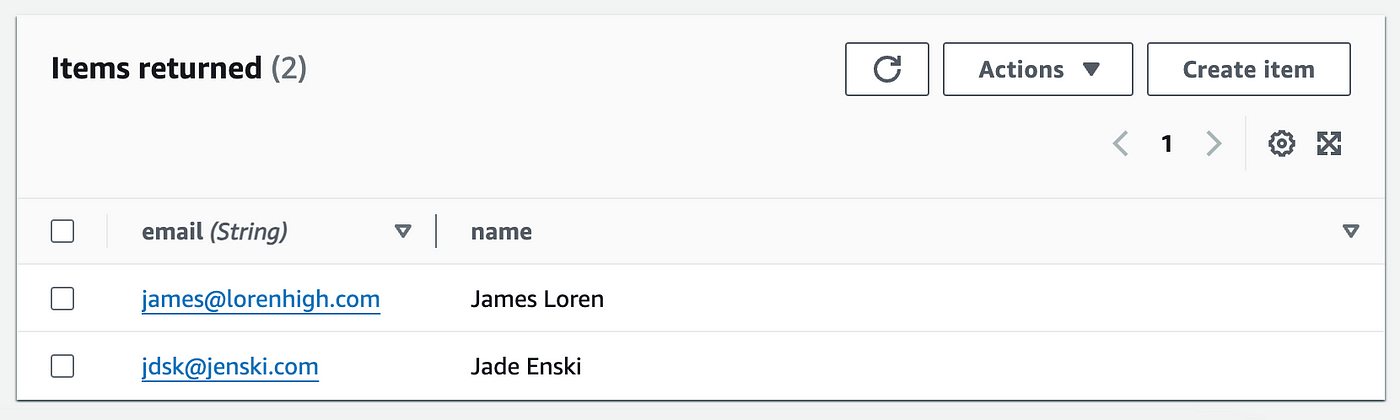

The first step in this newsletter app was storing subscribers’ emails inside a DynamoDB table.

I knew that storing small items like a name and an email would keep costs negligible.

On the website landing page, I added a subscription form.

Every time a user entered their email address, that email would be stored (optionally with their name) in the “subscribers” table in DynamoDB.

I made sure no emails were duplicated by using condition expressions.
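
Here is a minimal sketch of what that duplicate check could look like. The helper name `buildSubscribeParams` is hypothetical, the attribute names `email` and `name` are assumed from the description above, and the lowercase/trim normalization is my own assumption. The object it returns has the shape that `PutItemCommand` from `@aws-sdk/client-dynamodb` accepts.

```javascript
// Hypothetical helper: builds PutItem input that only succeeds for a new email.
function buildSubscribeParams(email, name) {
  // Normalizing the address is an assumption; it keeps "A@b.com" and "a@b.com"
  // from being stored as two different subscribers.
  const item = { email: { S: email.trim().toLowerCase() } };
  if (name) {
    item.name = { S: name };
  }
  return {
    TableName: "subscribers",
    Item: item,
    // The write is rejected if an item with this partition key already exists.
    ConditionExpression: "attribute_not_exists(email)",
  };
}

const params = buildSubscribeParams("  Jane@Example.com ", "Jane");
console.log(JSON.stringify(params, null, 2));
```

When the condition fails, DynamoDB rejects the write with a `ConditionalCheckFailedException`, which the form handler can treat as "already subscribed".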

With DynamoDB Streams enabled on the table, each new email item triggered an AWS Lambda function that sent the user an email asking them to confirm their subscription.

When they confirmed, that action would trigger a Lambda function that would add an “isConfirmed” attribute to the item in DynamoDB.
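
The two steps above can be sketched roughly as follows. Both helper names are hypothetical, and the code only builds and filters plain objects; in a real Lambda the update parameters would be passed to `UpdateItemCommand`. One detail worth noting: the confirmation update itself shows up in the stream as a `MODIFY` event, so this sketch only reacts to `INSERT` events to avoid sending the confirmation email twice.

```javascript
// Picks the emails of newly inserted subscribers out of a DynamoDB Streams event.
// Stream records always include the item's key, so the email (the partition key)
// is read from `Keys` rather than from the new image.
function newSubscriberEmails(streamEvent) {
  return streamEvent.Records
    .filter((record) => record.eventName === "INSERT")
    .map((record) => record.dynamodb.Keys.email.S);
}

// Hypothetical helper: UpdateItem input that marks a subscriber as confirmed.
function buildConfirmParams(email) {
  return {
    TableName: "subscribers",
    Key: { email: { S: email } },
    UpdateExpression: "SET isConfirmed = :confirmed",
    // Only update an existing subscriber; never create one from a confirm link.
    ConditionExpression: "attribute_exists(email)",
    ExpressionAttributeValues: { ":confirmed": { BOOL: true } },
  };
}

const sampleEvent = {
  Records: [
    { eventName: "INSERT", dynamodb: { Keys: { email: { S: "new@example.com" } } } },
    { eventName: "MODIFY", dynamodb: { Keys: { email: { S: "old@example.com" } } } },
  ],
};
console.log(newSubscriberEmails(sampleEvent)); // [ 'new@example.com' ]
console.log(buildConfirmParams("new@example.com"));
```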

To optimize costs here I limited each item to only an email (the partition key) and optionally a name for email personalizations.

According to my cost estimations, scanning:

5,000 email subscribers: a single scan would cost less than a penny; multiplied by 4 (4 weeks a month), that comes to about half a cent a month.

100,000 subscribers: by the same calculation, a few pennies a month.

1 million subscribers: this would come up to around 10 cents a month.

As you can see, the costs here were negligible.
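
For anyone who wants to redo this kind of estimate, here is a rough sketch of the arithmetic. Every default in it is an assumption for illustration (average item size, one send a week, and the price per million read request units), so treat the result as an order-of-magnitude check rather than a quote, and plug in the current pricing for your region.

```javascript
// Rough monthly cost of scanning the whole table once per send.
// All defaults are assumptions for illustration, not quoted AWS prices.
function estimateMonthlyScanCost(subscribers, options = {}) {
  const {
    avgItemBytes = 100,          // assumed size of an email + name item
    scansPerMonth = 4,           // one newsletter a week
    pricePerMillionReads = 0.25, // assumed USD price per million read request units
  } = options;

  // A Scan is billed on the data it reads, in 4 KB units;
  // an eventually consistent read uses half a unit per 4 KB.
  const fourKbBlocks = Math.ceil((subscribers * avgItemBytes) / 4096);
  const readUnitsPerScan = fourKbBlocks * 0.5;
  return (readUnitsPerScan * scansPerMonth * pricePerMillionReads) / 1_000_000;
}

for (const count of [5_000, 100_000, 1_000_000]) {
  const cost = estimateMonthlyScanCost(count);
  console.log(`${count} subscribers: about $${cost.toFixed(4)} per month`);
}
```

Because a scan is charged by the amount of data read rather than per item, keeping each item down to an email and a name is what keeps these numbers so small.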

Using SES to send out emails in bulk

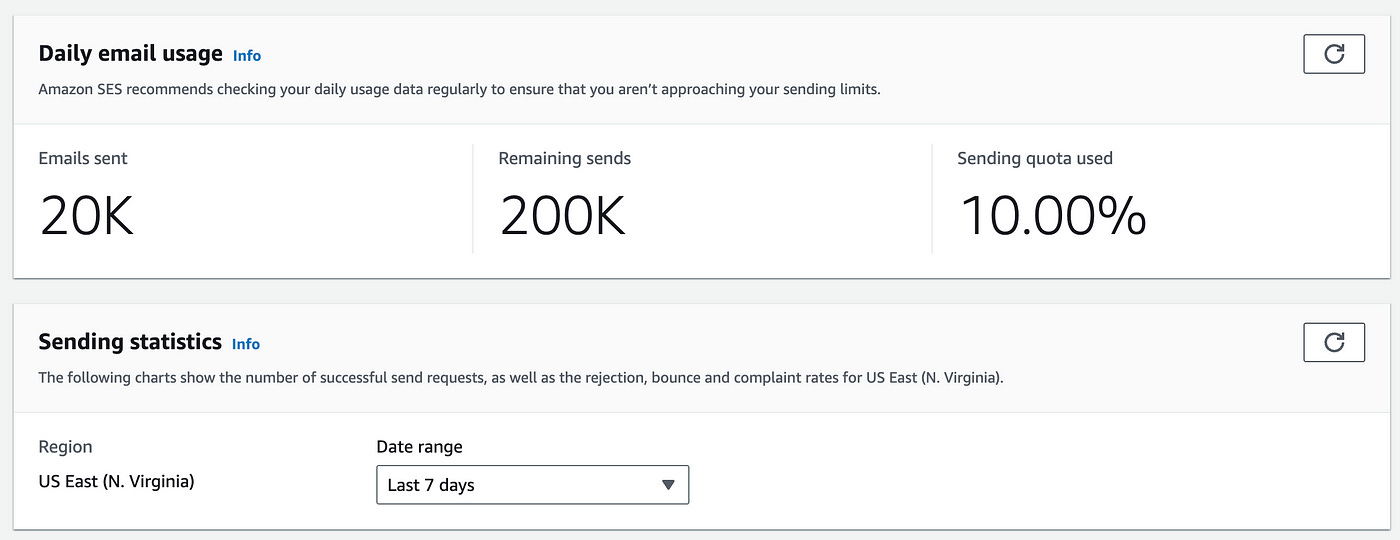

The next step I took was setting up Amazon SES (Simple Email Service) and moving it out of sandbox mode.

To do this I verified the domain and added the required DNS records at my hosting provider (all of these steps are well explained in SES).

I then created an email template to allow my client to send out newsletter blog posts.

Once it was set up, I was able to use AWS Lambda to send emails in bulk.
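
To give an idea of what an SES template looks like, here is a minimal sketch of the input for `CreateTemplateCommand` from `@aws-sdk/client-ses`. The template name matches the one used in the sending function later in this article; the subject, the markup, and the `{{title}}`, `{{name}}`, and `{{postUrl}}` placeholders are purely illustrative assumptions, not my client's actual template.

```javascript
// Illustrative SES template definition. The placeholders in double curly braces
// are filled in from the template data supplied at send time.
const createTemplateInput = {
  Template: {
    TemplateName: "email_newsletter_template",
    SubjectPart: "New post: {{title}}",
    HtmlPart:
      "<h1>{{title}}</h1><p>Hi {{name}},</p><p><a href=\"{{postUrl}}\">Read the full post</a></p>",
    // Plain-text fallback for email clients that do not render HTML.
    TextPart: "{{title}}\n\nHi {{name}},\n\nRead the full post: {{postUrl}}",
  },
};

console.log(JSON.stringify(createTemplateInput, null, 2));
```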

Creating the Lambda function to send emails

The first thing I did was give Lambda permission to communicate with Amazon SES and SQS (a message queue service).

I created an IAM role with read/write permissions for the Lambda function to access SES and SQS. (Follow this article to learn how to do this).
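
As a rough illustration, a policy along these lines would cover the calls made by the two functions in this article. It is a sketch under assumptions: the region and account ID are placeholders, the `dynamodb:Scan` statement is included because the first function reads the subscribers table, and in practice you may want to split this into one narrower policy per function.

```javascript
// Placeholder values: replace with your own region and AWS account ID.
const REGION = "us-east-1";
const ACCOUNT_ID = "123456789012";

const newsletterPolicy = {
  Version: "2012-10-17",
  Statement: [
    {
      Sid: "ReadSubscribers",
      Effect: "Allow",
      Action: ["dynamodb:Scan"],
      Resource: `arn:aws:dynamodb:${REGION}:${ACCOUNT_ID}:table/subscribers`,
    },
    {
      Sid: "UseNewsletterQueue",
      Effect: "Allow",
      // sqs:SendMessage also covers batch sends; the receive/delete/attributes
      // actions are what a Lambda function triggered by SQS needs.
      Action: [
        "sqs:SendMessage",
        "sqs:ReceiveMessage",
        "sqs:DeleteMessage",
        "sqs:GetQueueAttributes",
      ],
      Resource: `arn:aws:sqs:${REGION}:${ACCOUNT_ID}:EmailNewsletterQueue`,
    },
    {
      Sid: "SendNewsletter",
      Effect: "Allow",
      Action: ["ses:SendBulkTemplatedEmail"],
      Resource: "*",
    },
  ],
};

console.log(JSON.stringify(newsletterPolicy, null, 2));
```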

To avoid overloading SES and hitting its sending limits, I used Amazon SQS (Simple Queue Service) to queue the emails and send them one batch at a time.

A batch consisted of 20 emails — I made it easy to increase that number for more scalability in the future.
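
A minimal sketch of the batching idea: a small helper that splits a list into fixed-size groups, with the size read from configuration. The `chunk` helper and the `BATCH_SIZE` environment variable are hypothetical names used for illustration.

```javascript
// Splits an array into consecutive groups of at most `size` items.
function chunk(items, size) {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError("size must be a positive integer");
  }
  const batches = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Reading the size from the environment (an assumption) means it can be
// raised later without touching the code. Defaults to 20.
const BATCH_SIZE = Number(process.env.BATCH_SIZE ?? 20);

const emails = Array.from({ length: 45 }, (_, i) => `user${i}@example.com`);
// With the default of 20, 45 emails split into groups of 20, 20, and 5.
console.log(chunk(emails, BATCH_SIZE).map((batch) => batch.length));
```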

I created 2 Lambda functions that would be responsible for sending out emails.

Here’s a quick overview of what each did:

Function 1: This code is triggered when an article is published. It gets the emails from DynamoDB and sends them to SQS in batches of 20.

Function 2: This code is triggered by the SQS queue. It processes each batch and sends the emails using SES.

Here’s the code for function 1:

    import { DynamoDBClient, ScanCommand } from "@aws-sdk/client-dynamodb";
    import { SQSClient, SendMessageBatchCommand } from "@aws-sdk/client-sqs";

    const dbClient = new DynamoDBClient({});
    const sqsClient = new SQSClient({});
    const queueUrl = process.env.SQS_QUEUE_URL;
    const BATCH_SIZE = 20;
    // SendMessageBatch accepts at most 10 entries per call,
    // so each batch of 20 goes out as two SQS requests.
    const SQS_MAX_ENTRIES = 10;

    export const handler = async (event) => {
      try {
        // Note: a single Scan returns at most 1 MB of data; larger tables need pagination.
        const { Items = [] } = await dbClient.send(new ScanCommand({ TableName: "subscribers" }));
        const emailAddresses = Items.map((item) => item.email.S);

        for (let i = 0; i < emailAddresses.length; i += BATCH_SIZE) {
          const emailBatch = emailAddresses.slice(i, i + BATCH_SIZE);

          for (let j = 0; j < emailBatch.length; j += SQS_MAX_ENTRIES) {
            const sqsBatch = emailBatch.slice(j, j + SQS_MAX_ENTRIES).map((email, index) => ({
              // Id must be unique within the request and may only contain letters,
              // digits, hyphens, and underscores, so the raw email cannot be used.
              Id: `msg-${i + j + index}`,
              MessageBody: JSON.stringify({ email }),
            }));

            await sqsClient.send(new SendMessageBatchCommand({
              QueueUrl: queueUrl,
              Entries: sqsBatch,
            }));
          }
        }

        return { statusCode: 200, body: 'Emails enqueued successfully' };
      } catch (error) {
        console.error(error);
        return { statusCode: 500, body: 'Error enqueuing emails' };
      }
    };

The code above scans the DynamoDB table and extracts the email addresses. It then works through them in batches of 20 and sends each batch to the SQS queue.
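
One thing to keep in mind as the list grows: a single DynamoDB `Scan` call returns at most 1 MB of data, so a large table has to be read page by page by following `LastEvaluatedKey`. Here is a minimal sketch of that loop. The `scanPage` callback is a hypothetical stand-in so the logic can run without AWS; in a Lambda function it could simply wrap `dbClient.send(new ScanCommand(input))`.

```javascript
// Collects every subscriber email by following LastEvaluatedKey across Scan pages.
// `scanPage` is a hypothetical callback: it receives Scan input and resolves to a
// Scan-shaped response ({ Items, LastEvaluatedKey }).
async function scanAllEmails(scanPage, tableName = "subscribers") {
  const emails = [];
  let startKey;
  do {
    const page = await scanPage({
      TableName: tableName,
      ProjectionExpression: "email", // return only the attribute we need
      ExclusiveStartKey: startKey,   // undefined on the first request
    });
    for (const item of page.Items ?? []) {
      emails.push(item.email.S);
    }
    // DynamoDB omits LastEvaluatedKey once the final page has been read.
    startKey = page.LastEvaluatedKey;
  } while (startKey);
  return emails;
}

// Example with two fake pages standing in for DynamoDB responses.
const fakePages = [
  { Items: [{ email: { S: "a@example.com" } }], LastEvaluatedKey: { email: { S: "a@example.com" } } },
  { Items: [{ email: { S: "b@example.com" } }] },
];
let pageIndex = 0;
scanAllEmails(async () => fakePages[pageIndex++]).then((all) => console.log(all));
```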

The code for function 2:

    import { SESClient, SendBulkTemplatedEmailCommand } from "@aws-sdk/client-ses";
    import { SQSClient, DeleteMessageBatchCommand } from "@aws-sdk/client-sqs";

    const sesClient = new SESClient({});
    const sqsClient = new SQSClient({});
    const templateName = "email_newsletter_template";
    // Must be an address or domain verified in SES.
    const sourceEmail = "urielas1@gmail.com";

    export const handler = async (event) => {
      try {
        // Each SQS record carries one subscriber email enqueued by function 1.
        const messages = event.Records;

        const destinations = messages.map((record) => {
          const { email } = JSON.parse(record.body);
          return {
            Destination: { ToAddresses: [email] },
            ReplacementTemplateData: JSON.stringify({}),
          };
        });

        const sendCommand = new SendBulkTemplatedEmailCommand({
          Source: sourceEmail,
          Template: templateName,
          // Fallback values used when a destination supplies no replacement data.
          DefaultTemplateData: JSON.stringify({}),
          Destinations: destinations,
        });
        await sesClient.send(sendCommand);

        // Remove the processed messages from the queue.
        // SQS event records expose messageId and receiptHandle in camelCase.
        const deleteParams = {
          QueueUrl: process.env.SQS_QUEUE_URL,
          Entries: messages.map((msg) => ({
            Id: msg.messageId,
            ReceiptHandle: msg.receiptHandle,
          })),
        };
        await sqsClient.send(new DeleteMessageBatchCommand(deleteParams));

        return { statusCode: 200, body: 'Emails sent successfully' };
      } catch (error) {
        console.error(error);
        return { statusCode: 500, body: 'Error sending emails' };
      }
    };

This second function is integrated with SQS as its event source, so it runs only when messages arrive in the queue.

This function processes the messages in the queue and sends out bulk emails with SES based on each email in the queue.
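
Since the subscribers table can also hold a name, here is a sketch of how the mapping from queue messages to SES destinations could carry per-recipient data. It assumes each message body contains a `name` field next to the email, which the messages described in this article do not include, and it assumes the template uses a `{{name}}` placeholder. The helper name `toDestinations` is hypothetical.

```javascript
// Fallback template data for recipients without their own values.
// It would be passed as DefaultTemplateData on the bulk send request.
const DEFAULT_TEMPLATE_DATA = JSON.stringify({ name: "there" });

// Maps SQS records to SES bulk destinations with per-recipient template data.
// Assumes each body looks like {"email": "...", "name": "..."}; the `name`
// field is an assumption for illustration.
function toDestinations(records) {
  return records.map((record) => {
    const { email, name } = JSON.parse(record.body);
    return {
      Destination: { ToAddresses: [email] },
      // An empty object lets SES fall back to the default template data.
      ReplacementTemplateData: JSON.stringify(name ? { name } : {}),
    };
  });
}

const sampleRecords = [
  { body: JSON.stringify({ email: "jane@example.com", name: "Jane" }) },
  { body: JSON.stringify({ email: "anon@example.com" }) },
];
console.log(toDestinations(sampleRecords), DEFAULT_TEMPLATE_DATA);
```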

For the first function, I set up and configured a function URL to let the function be directly invoked from the frontend.

Using SQS to queue bulk email jobs

I then set up the SQS queue in the AWS console.

I created a queue that I called EmailNewsletterQueue.

I used the standard queue type and configured the message retention period to something short — 2 days only. For higher scalability I could always change this value to a longer time.
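
I did this through the console, but for reference the same settings can be expressed as input for `CreateQueueCommand` from `@aws-sdk/client-sqs`. This is a sketch of the equivalent configuration, not something I ran for this project.

```javascript
// SQS expects the retention period in seconds.
const TWO_DAYS_IN_SECONDS = 2 * 24 * 60 * 60;

const createQueueInput = {
  // A standard queue is the default; FIFO queues need a ".fifo" name suffix.
  QueueName: "EmailNewsletterQueue",
  Attributes: {
    // Attribute values are passed as strings.
    MessageRetentionPeriod: String(TWO_DAYS_IN_SECONDS),
  },
};

console.log(createQueueInput);
```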

Publishing the newsletter to subscribers

The last step here was to build the newsletter publishing frontend.

For this, I used a Next.js app. The basic workflow was this:

On the admin backend I built a “Write” page where my client could write blog posts (I used the TipTap editor — a really cool library).

When he hit the “publish” button, the first Lambda function would be invoked using an Axios call.

The Lambda function URL allowed the call to go directly to Lambda without needing to set up an API Gateway.
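
The publish call can be sketched like this. I used Axios in the app; this version swaps in the built-in `fetch` so it has no dependencies. The function URL and the shape of the `post` payload are placeholders, and the `fetchImpl` parameter exists only so the function can be exercised without a network.

```javascript
// Calls the Lambda function URL when a post is published.
// `functionUrl` and the `post` payload are hypothetical placeholders.
async function publishNewsletter(functionUrl, post, fetchImpl = fetch) {
  const response = await fetchImpl(functionUrl, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(post),
  });
  if (!response.ok) {
    throw new Error(`Publish failed with status ${response.status}`);
  }
  return response.text();
}

// Usage from the "publish" button handler (URL is a placeholder):
// await publishNewsletter("https://<your-function-url>/", { title: "My new post" });
```

If the function URL uses the `NONE` auth type, anyone who knows the URL can trigger a send, so it is worth adding some form of check before enqueueing emails.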

Publishing an article would then trigger the workflow above, including scanning and extracting all emails from DynamoDB, looping through batches of 20 at a time, and sending out the blog post to each email subscriber.

Result

I ran several tests. I was able to create several temporary emails and test out the system I had built.

After a few adjustments, it functioned well. A blog post publish action would send out emails with no perceivable delay.

However, that is likely to change at scale, once there are hundreds of thousands of email subscribers.

Only time will tell — but for now I am quite satisfied with the system I built.

👋 My name is Uriel Bitton and I’m committed to helping you master Serverless, Cloud Computing, and AWS.

🚀 If you want to learn how to build serverless, scalable, and resilient applications, you can also follow me on LinkedIn for valuable daily posts.

Thanks for reading and see you in the next one!